Almost human? How Azure Cognitive Services speech sounds more like a real person

Blog|by Mary Branscombe|8 November 2018

Speech recognition systems are getting more powerful. They’re most accurate with a good close-up microphone and some advance knowledge of the vocabulary likely to be used on specific topics, as with the PowerPoint Presentation Translator, which transcribes what the presenter says while they go through a deck of slides and translates it into multiple languages. They also do better when the system doesn’t have to work in real time, like the transcription in Microsoft Stream, which takes about an hour to process a 30-minute video and can even recognise different speakers.

Similar options are available to developers in the Azure Cognitive Services speech APIs: Speech-to-Text (which includes customisable speech models for specific vocabularies), Speaker Recognition (which covers both identification and verification) and Speech Translation. Combine those with the language services that can recognise the point of what someone is trying to say: if they’re asking for details of flights, which part of the sentence is about their destination and which is the day they want to fly? If you want to use speech recognition in native apps rather than JavaScript, there’s also a Cognitive Services Speech SDK that includes speech to text, speech translation and intent recognition in C# (on Windows because it needs UWP or .NET Standard), C/C++ (on both Windows and Linux), Java (for Android and other devices) and Objective-C.

But just being able to understand speech isn’t enough to build real-time interactive speech systems. If users can talk to your application, it might need to be able to talk back, confirming that what they say has been recognised, or even hold a conversation with the user to extract information. If a customer tells a travel agent virtual assistant they want to fly to New York in early December, the assistant needs to ask whether they want JFK, Newark or LaGuardia and where they are flying from, and then tell them the price of the different flight options.
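The travel agent conversation described above is essentially slot filling: the assistant keeps asking until it has every detail it needs. Here is a minimal sketch of that loop in Python. The `FlightQuery` class, the `next_question` function and the airport table are all hypothetical names made up for illustration; they are not part of any Azure API, and a real assistant would fill the slots from an intent recognition service rather than by hand.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical lookup table: cities served by more than one airport.
AIRPORTS_BY_CITY = {"New York": ["JFK", "Newark", "LaGuardia"]}


@dataclass
class FlightQuery:
    """Details the assistant needs before it can quote a price."""
    destination_city: Optional[str] = None
    destination_airport: Optional[str] = None
    origin: Optional[str] = None
    travel_date: Optional[str] = None


def next_question(query: FlightQuery) -> Optional[str]:
    """Return the next thing to ask, or None once nothing is missing."""
    if query.destination_city is None:
        return "Where would you like to fly to?"
    airports = AIRPORTS_BY_CITY.get(query.destination_city, [])
    if query.destination_airport is None and len(airports) > 1:
        choices = ", ".join(airports[:-1]) + " or " + airports[-1]
        return f"Which airport would you like: {choices}?"
    if query.origin is None:
        return "Where are you flying from?"
    if query.travel_date is None:
        return "Which day would you like to fly?"
    return None


# The customer has said "New York in early December" and nothing else yet.
query = FlightQuery(destination_city="New York", travel_date="early December")
print(next_question(query))
```

Each answer the customer gives fills one more field, and the text returned by `next_question` is what would be passed to the text to speech service to be spoken aloud.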

Currently the Azure Cognitive Services Text to Speech API can convert text to audio in multiple languages in close to real time, and the audio can be saved as a file for later use. There are more than 75 voices to choose from in 49 languages and locales (like different variants of English for the US, UK and Australia), with male and female voices and parameters developers can adjust to control speed, pitch, volume, pronunciation and extra pauses.
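Adjustments like speed, pitch, volume and pauses are typically expressed in SSML, the W3C Speech Synthesis Markup Language, which wraps the text to be spoken in elements such as `prosody` and `break`. The Python sketch below builds such a document as a string. The `build_ssml` helper is a hypothetical name, and the voice name shown is an assumption based on the naming style Microsoft used for its voices; check the service's voice list for the exact values it accepts.

```python
import xml.etree.ElementTree as ET
from xml.sax.saxutils import escape, quoteattr


def build_ssml(text, voice, lang="en-US", rate="medium",
               pitch="medium", volume="medium", pause_ms=0):
    """Wrap text in SSML with prosody settings and an optional trailing pause."""
    pause = f'<break time="{int(pause_ms)}ms"/>' if pause_ms > 0 else ""
    return (
        '<speak version="1.0" '
        'xmlns="http://www.w3.org/2001/10/synthesis" '
        f'xml:lang={quoteattr(lang)}>'
        f'<voice name={quoteattr(voice)}>'
        f'<prosody rate={quoteattr(rate)} pitch={quoteattr(pitch)} '
        f'volume={quoteattr(volume)}>'
        f'{escape(text)}{pause}'
        '</prosody></voice></speak>'
    )


# Assumed voice name, for illustration only.
VOICE = "Microsoft Server Speech Text to Speech Voice (en-US, JessaRUS)"

ssml = build_ssml(
    "Your flight to New York leaves at nine.",
    voice=VOICE,
    rate="slow",    # SSML also allows x-slow, medium, fast, x-fast
    pitch="high",   # and x-low, low, medium, x-high
    volume="loud",  # and silent, x-soft, soft, medium, x-loud
    pause_ms=500,   # half a second of silence after the sentence
)

ET.fromstring(ssml)  # raises ParseError if the markup is not well-formed
print(ssml)
```

Escaping the text and quoting the attributes keeps the markup valid even when the sentence contains characters such as `&` or `<`.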

Microsoft’s new deep learning text to speech system, demonstrated at the Ignite conference recently, will support the same 49 languages and customisation options for developers who want to build their own voices, but while it’s in private preview it has just two pre-built voices in English, Jessa and Guy.

The problem with computer-generated speech is that it can be tiring to listen to, because it just doesn’t sound right. It’s acceptable for short utterances from a virtual assistant telling you the weather forecast or confirming the timer you just set, but it’s often not natural or engaging enough for something you’re listening to for a long time, like an audio book, because there just isn’t enough expression to make it easy to listen to. Voice navigation systems would be clearer and easier to understand if the directions sounded less like a computer too.

It’s a hard problem. Human-like speech has to get the phonetics right, so each phoneme, syllable, word and phrase is pronounced correctly and articulated clearly. But to avoid sounding monotonous and robotic, it’s also important to use the correct patterns of stress and intonation, known as prosody: putting the stress on the right syllable in a word, making different syllables the right length and putting the pauses in the right places as the different parts that make up speech are synthesised into a computer voice.

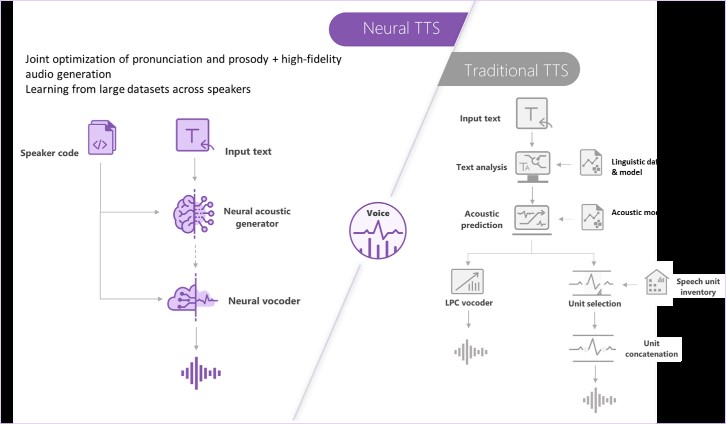

Most text to speech systems split the process of putting the stress in the right place into several different steps. Analysis of the text in conjunction with a linguistic data model is one step, followed by a separate step of predicting the correct prosody with a different acoustic model; the output from those two steps is fed into a vocoder while the different units of speech are selected from a standard inventory of speech sections and joined together, which can cause glitches and discontinuities. The learning model for that can over-smooth the differences between sounds, making the generated speech sound muffled or buzzy rather than clear and expressive.

The recent improvements in speech recognition and translation have come from using deep neural networks and that’s what the new neural text to speech API does.

The difference between the preview Cognitive Services neural text to speech and more traditional approaches that split the problem into more stages. Source: Microsoft

Neural text to speech combines the stages of synthesising the voice and putting the stresses in the right places in the words, so it can optimise pronunciation and prosody together and generate high-quality audio for the speech. Instead of using multiple different models at different stages, it learns from large data sets of speech by many different speakers and uses that end-to-end machine learning model for both the neural network acoustic generator that predicts what the prosody should be in the speech and the neural network vocoder that takes that prosody and generates the speech.

That produces a more natural voice that Microsoft calls nearly indistinguishable from recorded human voices. You can compare the voices yourself in these audio files for three different sentences.

“The third type, a logarithm of the unsigned fold change, is undoubtedly the most tractable.”

“As the name suggests, the original submarines came from Yugoslavia.”

“This is easy enough if you have an unfinished attic directly above the bathroom.”

If you want to do real-time speech generation, Azure offers streaming speech served from Azure Kubernetes Service, which lets you scale it out as necessary for your workloads. You can call both the new neural text to speech and the traditional text to speech APIs from the same endpoint if you need to cover more languages.
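As a rough illustration of what calling a text to speech endpoint and streaming back the audio could look like, the Python sketch below prepares an HTTP request and reads the response in chunks. The URL pattern and header names follow the publicly documented Text to Speech REST API as best recalled here, so treat them as assumptions to check against the current documentation; the private preview may use a different endpoint. The region, token and function names are placeholders, and nothing is sent over the network when the block runs.

```python
import urllib.request


def build_tts_request(region, token, ssml,
                      output_format="riff-24khz-16bit-mono-pcm"):
    """Prepare (but do not send) a POST request carrying SSML to be spoken."""
    # Assumed URL pattern; verify against the service documentation.
    url = f"https://{region}.tts.speech.microsoft.com/cognitiveservices/v1"
    headers = {
        "Authorization": f"Bearer {token}",
        "Content-Type": "application/ssml+xml",
        "X-Microsoft-OutputFormat": output_format,
        "User-Agent": "tts-sketch",
    }
    return urllib.request.Request(
        url, data=ssml.encode("utf-8"), headers=headers, method="POST"
    )


def stream_audio(request, chunk_size=4096):
    """Yield audio bytes as they arrive instead of waiting for the whole file."""
    with urllib.request.urlopen(request) as response:
        while True:
            chunk = response.read(chunk_size)
            if not chunk:
                break
            yield chunk


SSML = (
    '<speak version="1.0" xmlns="http://www.w3.org/2001/10/synthesis" '
    'xml:lang="en-US"><voice name="PLACEHOLDER-VOICE-NAME">'
    'Hello from the cloud.</voice></speak>'
)

# Placeholder region and token; a real token comes from the service's
# authentication step using your subscription key.
request = build_tts_request("westus", "PLACEHOLDER-TOKEN", SSML)
print(request.get_method(), request.full_url)

# To actually fetch the audio with real credentials:
# with open("speech.wav", "wb") as out:
#     for chunk in stream_audio(request):
#         out.write(chunk)
```

Reading the response in chunks is what lets an application start playing the audio before synthesis of the whole utterance has finished.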

The private preview of neural text to speech is currently available by application; fill out the form at http://aka.ms/neuralttsintro to explain how you plan to use the service.

Contact Grey Matter to discuss Azure and Cognitive Services, or if you require technical advice: +44 (0)1364 654100.


Author

Mary Branscombe

Independent Writing and Editing Professional at Freelance

Mary is a technology journalist with over three decades of experience, regularly contributing to CIO.com, The New Stack, Computer Weekly, The Stack, AskWoody and more. She specialises in Microsoft, authoring two books on Windows 8 and one O'Reilly book on Azure AI services with writing partner, Simon Bisson.
