Microsoft Cognitive Services: Computer Vision API

Blog|by Jamie Maguire|15 November 2018

Introduction

In the last article, we looked at the Face API and explored some of the rich functionality it offers. We also saw how the ride-sharing firm Uber deployed a solution built on this technology to improve the security of both passengers and drivers.

In this article, we explore another image recognition API that belongs to the Cognitive Services family – the Computer Vision API.

What can you do with the Computer Vision API?

The Computer Vision API ships with a rich feature set. At the time of writing, it lets you build solutions that can:

- Tag images based on content

- Categorise images

- Identify the type and quality of images

- Detect human faces and return their coordinates

- Recognise domain-specific content

- Generate descriptions of content

- Use OCR (optical character recognition) to identify printed text found in images

- Recognise text

- Distinguish between colour schemes

- Flag adult content

- Crop photos to be used as thumbnails

With such a rich set of features, the API can be deployed in many use cases and industries. As with the other endpoints in the Cognitive Services ecosystem, Microsoft has made it straightforward to integrate and consume.

With just a few lines of code, you can integrate the API with your existing software applications using a language of your choice such as C#, JavaScript, Node or Python. There are a few constraints in terms of the image size and file formats but these aren’t really showstoppers.

Image Tagging

Currently, the API can identify tags for over 2000 recognisable objects, living beings, scenery and actions. A collection of image tags then goes on to form the basis for the overall image “description”, which is returned as human-readable language. For example, consider the following image:

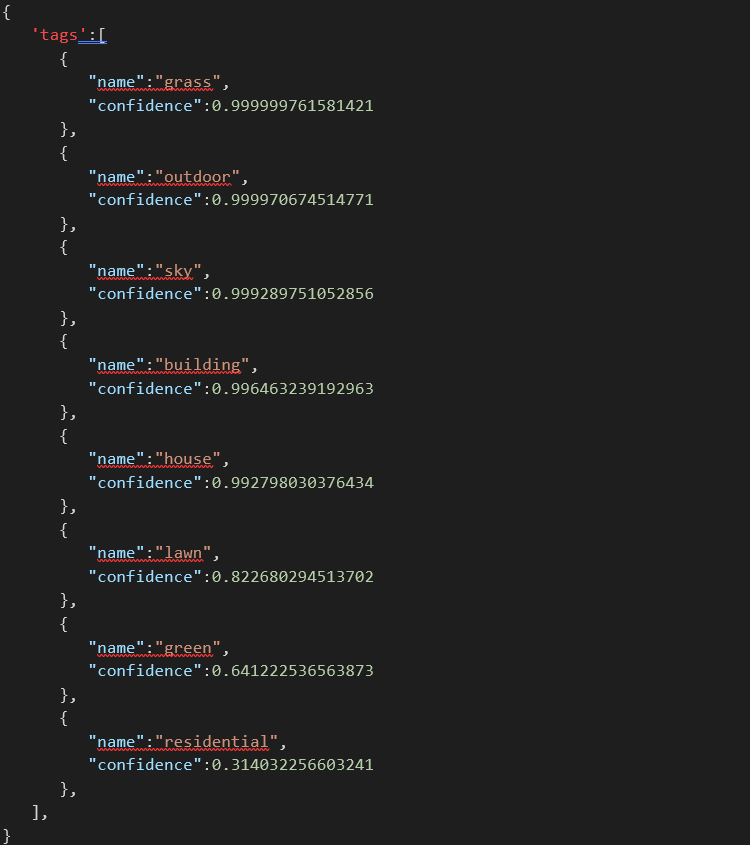

When you supply this image to the Computer Vision API endpoint (which can be done by uploading the binary or sending the image URL), it returns the following JSON:

In this JSON response, you can see the API has been able to identify the key elements that form part of the image such as:

- Grass

- Building

- House

- Lawn

The API has even been able to identify with high confidence (0.99) that this image was taken outside (the label “outdoor”).
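To make the tag list above a little more concrete, here is a minimal Python sketch of how you might filter tags by confidence once you have the parsed JSON. The response shape (a `tags` array of objects with `name` and `confidence`) is my assumption about the format, and `confident_tags` is a hypothetical helper. The tag names and the 0.99 score for "outdoor" come from this article; the other confidence values are made up purely for illustration.

```python
# Illustrative data only: tag names and the 0.99 "outdoor" score are from the
# article; every other confidence value here is invented for the example.
sample_response = {
    "tags": [
        {"name": "grass", "confidence": 0.98},
        {"name": "outdoor", "confidence": 0.99},
        {"name": "building", "confidence": 0.95},
        {"name": "house", "confidence": 0.91},
        {"name": "lawn", "confidence": 0.85},
    ]
}


def confident_tags(response, threshold=0.9):
    """Return tag names with confidence >= threshold, most confident first."""
    tags = response.get("tags", [])
    kept = [tag for tag in tags if tag["confidence"] >= threshold]
    kept.sort(key=lambda tag: tag["confidence"], reverse=True)
    return [tag["name"] for tag in kept]


print(confident_tags(sample_response))
```

Choosing a threshold is a judgement call for your own application; a stricter value trades recall for fewer false labels.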

When using the Image Tagging endpoint, it’s important to mention that tags don’t belong to a hierarchy. For example, car, lorry and bus aren’t classified as belonging to the “motorised vehicle group”.

If classifying images into groups is functionality you need, however, there is an endpoint for that, which brings us on to the next topic.

Image Categorisation

Classifying images is no easy feat. In fact, a whole research initiative was launched in the last 10 years that united academics and computer science experts from around the world. One of the tasks at the heart of this initiative was to build a training dataset that represented real-life objects.

AI experts would submit their algorithms and datasets as part of an annual challenge to see which one could identify objects with the best accuracy. This dataset went on to be known as ImageNet.

Winners from the first intake of the ImageNet challenge moved into senior roles at firms like Baidu, Google and Huawei, and it can be said that ImageNet has been one of the main drivers for the AI-based image classification that can be seen today!

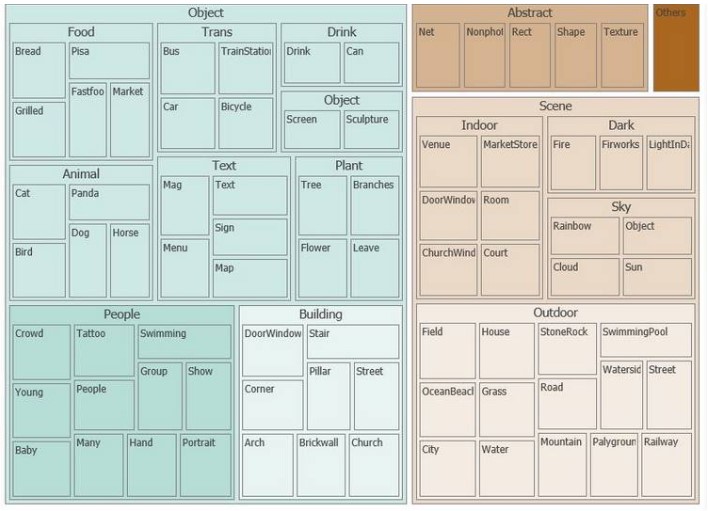

With the Computer Vision API, you don’t need to write complex algorithms to group or classify images. And unlike the Image Tagging endpoint, the Image Categorisation endpoint will categorise an image into a group (or taxonomy) that belongs to a parent/child relationship.

You can see an example of some of these in the image below. You can also find the entire list of categories here.

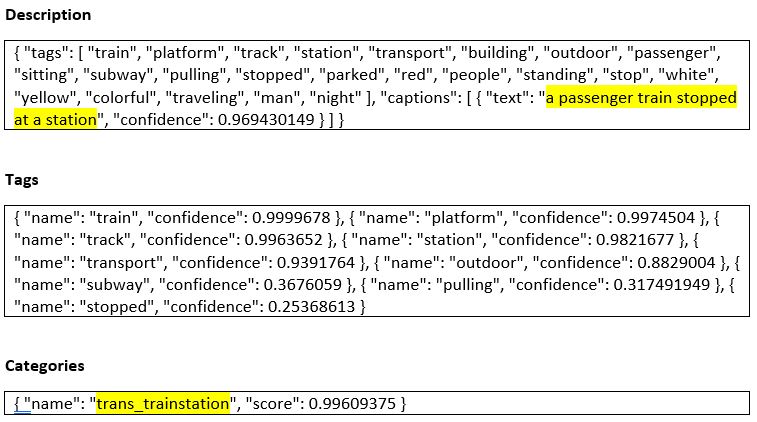

Consider this image of a train in the London Underground:

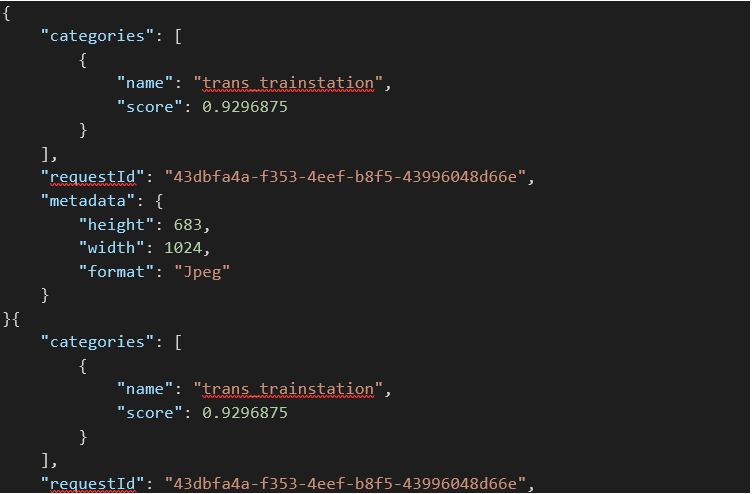

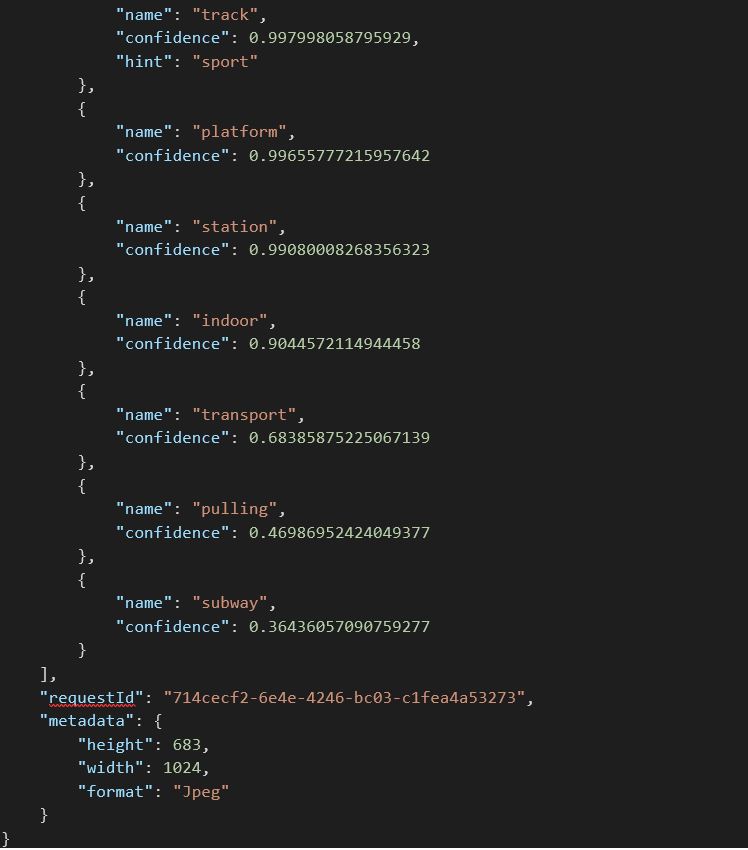

When this image is passed to the Classification endpoint, the following JSON, with accompanying confidence scores, is returned:

Looking at the JSON, you can see the API has been able to successfully identify a suitable Description, related Tags and has assigned the Category of trans_trainstation!

There are also plenty of other relevant attributes associated with the image, such as subway, track, red, people and so on.
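Because category names such as trans_trainstation encode the parent/child relationship in the name itself, a small helper can pull the two parts apart. The sketch below assumes the response contains a `categories` array whose items have `name` and `score` fields, and that the parent is whatever precedes the first underscore; both helpers are hypothetical and the score is an invented placeholder.

```python
def split_category(name):
    """Split 'trans_trainstation' into ('trans', 'trainstation').

    A name with nothing after the underscore (for example 'outdoor_') is
    treated as a top-level category and returns None for the child.
    """
    parent, _, child = name.partition("_")
    return parent, (child or None)


def best_category(response):
    """Return the highest-scoring category dict, or None if there are none."""
    categories = response.get("categories", [])
    if not categories:
        return None
    return max(categories, key=lambda category: category["score"])


# Illustrative response: the category name is from the article, the score is made up.
sample = {"categories": [{"name": "trans_trainstation", "score": 0.98}]}
top = best_category(sample)
print(split_category(top["name"]))
```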

Video Analysis

One of the most exciting features (in my opinion!) is the ability for the API to analyse videos in real time. The API can do this by capturing frames within a movie clip, which are then passed on for processing to identify Tags, Descriptions and other valuable attributes.

You can also pass these frames onto additional Cognitive Services API endpoints such as the Face API to surface more actionable insights!
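One way to read the frame-capture idea is that your own application decides which frames to send for analysis. The sketch below only does the arithmetic of choosing frame positions at a fixed time interval; it does not decode video (you would need a video library for that), and `frame_indices` and its parameters are hypothetical names rather than anything the API provides.

```python
def frame_indices(total_frames, fps, seconds_between_samples):
    """Return the indices of frames to analyse, one per sampling interval.

    total_frames: number of frames in the clip
    fps: frames per second of the clip
    seconds_between_samples: how often to grab a frame for analysis
    """
    step = max(1, round(fps * seconds_between_samples))
    return list(range(0, total_frames, step))


# A 10-second clip at 30 fps, sampled every 3 seconds.
print(frame_indices(total_frames=300, fps=30, seconds_between_samples=3))
```

Sampling sparsely like this keeps the number of API calls manageable, at the cost of missing anything that happens between the chosen frames.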

Invoking the API

Like the other Cognitive Services, there are a few ways you can consume the Computer Vision API. First of all, you’ll need a set of API keys. You can easily create these in Azure; just opt for the free pricing tier (F0) if you only want to experiment. This plan caps the number of requests you can make at 20 per minute (or 5,000 per month), which is sufficient to get started with.
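If you are experimenting on the free plan, it can help to space your calls out so you stay under the 20-per-minute cap. Here is a minimal client-side throttle, assuming an even spacing of 60 / 20 = 3 seconds between calls is acceptable. `Throttle` is a hypothetical helper of my own, not part of any Microsoft SDK, and it does nothing about the monthly cap.

```python
import time


class Throttle:
    """Space calls evenly so no more than `calls_per_minute` are made."""

    def __init__(self, calls_per_minute=20, clock=time.monotonic, sleep=time.sleep):
        self.min_interval = 60.0 / calls_per_minute
        self._clock = clock      # injectable so the class can be tested without waiting
        self._sleep = sleep
        self._last_call = None

    def wait(self):
        """Block until enough time has passed since the previous call."""
        now = self._clock()
        if self._last_call is not None:
            remaining = self.min_interval - (now - self._last_call)
            if remaining > 0:
                self._sleep(remaining)
                now = self._clock()
        self._last_call = now


# Usage: create one Throttle and call throttle.wait() before each API request.
throttle = Throttle(calls_per_minute=20)
```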

Postman

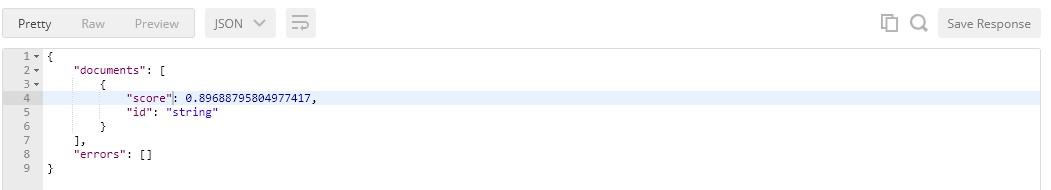

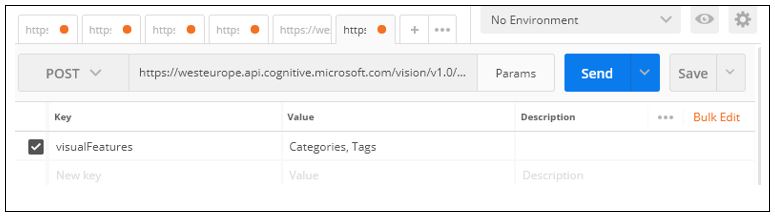

If you want to quickly test the endpoints and get a feel for the parameters, the requests you can make and the responses you’ll receive, then Postman is a great tool you can use. In this example, you can see I’ve built a request in Postman that accepts a URL (to our London Underground Train image) which gets passed onto the Computer Vision API endpoint:

Using Postman to build a request. Source: Postman

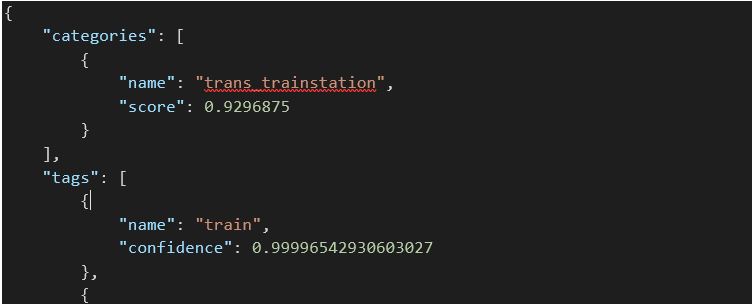

It’s important to note that your API key gets added to the Header and the image URL you want the API to process gets added to the JSON Body. When the API processes the request, it returns the following JSON response, in which the image has been categorised as trans_trainstation.
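The same request can be put together in code. The sketch below builds (but does not send) the request using only the Python standard library. The `Ocp-Apim-Subscription-Key` header name is the one Cognitive Services uses for API keys; the region (`westeurope`), the `v2.0` path, the placeholder key and the example image URL are all assumptions you would swap for your own values.

```python
import json
import urllib.request


def build_analyze_request(endpoint, subscription_key, image_url):
    """Build a POST request with the key in the header and the URL in the JSON body."""
    body = json.dumps({"url": image_url}).encode("utf-8")
    return urllib.request.Request(
        endpoint,
        data=body,
        headers={
            "Ocp-Apim-Subscription-Key": subscription_key,
            "Content-Type": "application/json",
        },
        method="POST",
    )


# Placeholder values: replace the region, key and image URL with your own.
request = build_analyze_request(
    "https://westeurope.api.cognitive.microsoft.com/vision/v2.0/analyze",
    "YOUR_API_KEY",
    "https://example.com/underground-train.jpg",
)
# To send it: urllib.request.urlopen(request), then json.load() the response.
```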

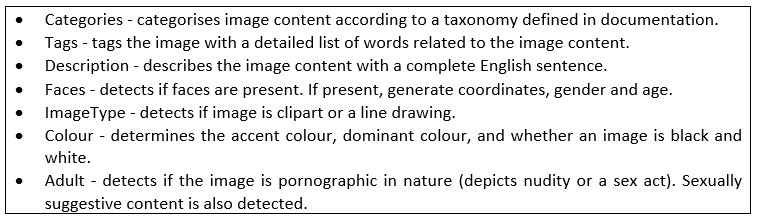

You can further enrich the data returned from the API by specifying values for the VisualFeatures parameter. For reference, here is a complete list of the features the API can return along with some descriptions of what each one means:

You can specify Categories and Tags for the VisualFeatures parameter like this:

… then process the same image again. This time, the API will return additional data in the JSON response. Ideal if you need to further classify or map data to your application!
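As a rough illustration of the VisualFeatures parameter, here is how you might append it to the endpoint URL as a query string. I'm assuming the parameter is spelled `visualFeatures` on the wire and takes a comma-separated list; the base URL (region and version) is a placeholder and `analyze_url` is a hypothetical helper.

```python
from urllib.parse import urlencode


def analyze_url(base_url, features):
    """Append a comma-separated visualFeatures query parameter to base_url."""
    # safe="," keeps the commas readable instead of encoding them as %2C.
    query = urlencode({"visualFeatures": ",".join(features)}, safe=",")
    return f"{base_url}?{query}"


url = analyze_url(
    "https://westeurope.api.cognitive.microsoft.com/vision/v2.0/analyze",
    ["Categories", "Tags"],
)
print(url)
```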

By now I think you get the idea! Naturally, you can consume the API in a language of your choice and if you’re using Visual Studio you can also leverage the Visual Studio Connected Services extension which makes it even easier to consume the API.

Closing Thoughts

We only touched on some of the features of this API and I see a lot of use cases for it. Some of these include:

- real-time processing of components in manufacturing plants to help with fault detection

- analysing torrents of social media data to help surface actionable insights

- helping to improve security systems and ID recognition

- …and many more

These sample use cases aside, I believe some of the biggest benefits of this API can be realised when it’s fused with other APIs in the Cognitive Services ecosystem. For example, by integrating the Text Analytics API, you could overlay an extra layer of intelligence to image tags that are being identified, thereby giving further insights.

You might decide to house the Computer Vision API in a Windows Service that automatically processes photos, scanned-in invoices or receipts to help automate elements of your business or workflows.

That’s it for now. In the next article, we’ll look at how you can use some of the Cognitive Services APIs to find insights in social media data!

If you have questions about integrating the Cognitive Services APIs into your application or for more information for your use case, contact the Grey Matter services team for an obligation-free discussion: +44 (0)1364 654 100.

Author

Jamie Maguire

Software Architect at Microsoft for Startups

Jamie is one of our Vendor Marketing Managers, specialising in mapping. He oversees several key vendors, including HERE Technologies, Azure Maps, TomTom and Adobe. During his time as a Marketing Manager across diverse roles, he's specialised in crafting compelling stories and leveraging digital tools for maximum impact.