Language Understanding Intelligent Service – LUIS

Blog|by Jamie Maguire|13 July 2018

Introduction

For machines, understanding human language can be difficult. There are many reasons for this: the order of the words can change the overall meaning of a sentence, for example, or someone might be expressing an opinion with sarcasm or irony.

Other words in a sentence may add no real value to it; these are often referred to as Stop Words or Noise Words. In Natural Language Processing (NLP) algorithms, it’s common to remove such words during a pre-processing stage to optimise the NLP processing. Cleansing and pre-processing data is only one small part of the problem, however, and there are other challenges to consider. For example:

- How can the machine identify the underlying intent behind the message?

- How can the machine identify if people are talking about specific things (entities)?
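
To make the stop-word pre-processing step mentioned earlier more concrete, here is a minimal sketch in Python. The word list is a tiny hand-picked assumption for illustration, not a standard list, and `remove_stop_words` is a hypothetical helper name; real NLP libraries ship much larger, language-specific lists.

```python
from typing import List

# A tiny, hand-picked stop-word list for illustration only (an assumption,
# not a standard list).
STOP_WORDS = {"a", "an", "the", "to", "of", "is", "i", "am"}


def remove_stop_words(sentence: str) -> List[str]:
    """Lower-case the sentence, split on whitespace and drop stop words."""
    tokens = sentence.lower().split()
    return [token for token in tokens if token not in STOP_WORDS]


print(remove_stop_words("I might get a new phone"))
# ['might', 'get', 'new', 'phone']
```

Removing words like this shrinks the input, but it does nothing to reveal intent or entities, which is why the two questions above still need answering.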

Understanding human language and POS Tagging

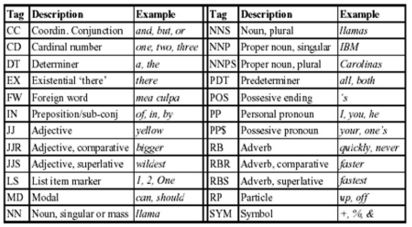

POS (Part of Speech) Tagging is one technique that can address some of these challenges and help the machine understand the written word. It involves assigning a ‘tag’ or ‘category’, in the form of a short code, to each word in a sentence. In the English language, some common POS categories are:

- nouns

- verbs

- adjectives

- adverbs

You can find numerous POS Tagging datasets online; a popular set is the Penn Treebank POS Tag Set. In the image below, you can see an extract of this:

By running a sentence through a POS Tagger such as the Stanford Tagger, you can identify the main tags in a sentence. For example, consider the following sentence:

“I think I’m going to get a new car”

After being run through the Stanford Tagger, the following is returned:

I/FW think/NN I’m/NN going/VBG to/TO get/VB a/DT new/JJ car/NN
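
Output in this `word/TAG` format is straightforward to work with in code. The sketch below splits the tagged line above into (word, tag) pairs and then searches for one simple pattern: an adjective (`JJ`) immediately followed by a noun (`NN`). The function names and the choice of pattern are assumptions made for illustration; they are not taken from the Twitter project described in this post.

```python
from typing import List, Tuple


def parse_tagged(text: str) -> List[Tuple[str, str]]:
    """Split 'word/TAG word/TAG ...' into (word, tag) pairs."""
    pairs = []
    for token in text.split():
        # rpartition splits on the last '/', so words containing '/' survive.
        word, _, tag = token.rpartition("/")
        pairs.append((word, tag))
    return pairs


def adjective_noun_pairs(pairs: List[Tuple[str, str]]) -> List[Tuple[str, str]]:
    """Return (adjective, noun) pairs where a JJ tag is directly followed by NN."""
    return [
        (first_word, second_word)
        for (first_word, first_tag), (second_word, second_tag) in zip(pairs, pairs[1:])
        if first_tag == "JJ" and second_tag == "NN"
    ]


tagged = "I/FW think/NN I’m/NN going/VBG to/TO get/VB a/DT new/JJ car/NN"
print(adjective_noun_pairs(parse_tagged(tagged)))
# [('new', 'car')]
```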

When you have these tags, you can look for patterns that help give you further insight into the text you’re dealing with. I have done quite a bit of this while working on a project with Twitter. In the screenshot below, you can see a sample record I had been working with:

In that project, I was looking to identify specific signals in Twitter data or, to be more precise, to surface Twitter users who were expressing commercial intent.

Despite being able to identify the POS tags, the challenge of understanding the intent in each tweet was still there!

LUIS to the rescue!

The Microsoft Cognitive Services ecosystem features a service called LUIS – the Language Understanding Intelligent Service. LUIS has effectively democratised complex NLP algorithms and lowered the barrier to entry for developers who need to leverage NLP technology.

Using LUIS, you can build natural language understanding solutions to help you identify information in sentences (Utterances). The service can interpret the user’s underlying Intent, and is also able to extract valuable information from sentences in the form of Entities.

The language model is powered by machine learning and is continually learning, which means LUIS can process text that it has not been trained with previously. Ideal if you need to process data at scale!

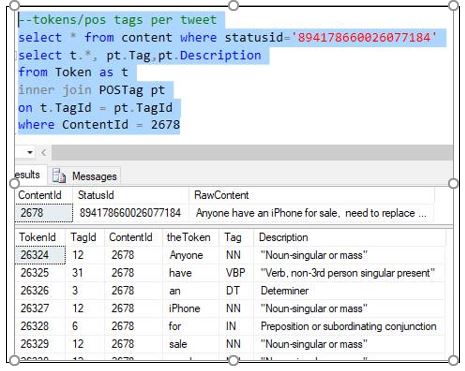

Intents and Utterances

Intents, Utterances and Entities form the main pillars of your LUIS application. You can configure these from the LUIS Dashboard. In the dashboard, you add one or more Intents to your LUIS application. Multiple Utterances can then be added to each Intent, which further helps LUIS understand the types of sentences it can expect to deal with.

For example, an Intent could be Sales.Lead. This Intent could have multiple Utterances such as:

- I would like a new phone

- I might get a new phone

In the screenshot below, you can see the Sales.Lead Intent with the respective Utterances that represent the potential purchase of a new phone:

If incoming text is close enough to either of these Utterances, it will be categorised as belonging to the Sales.Lead Intent.

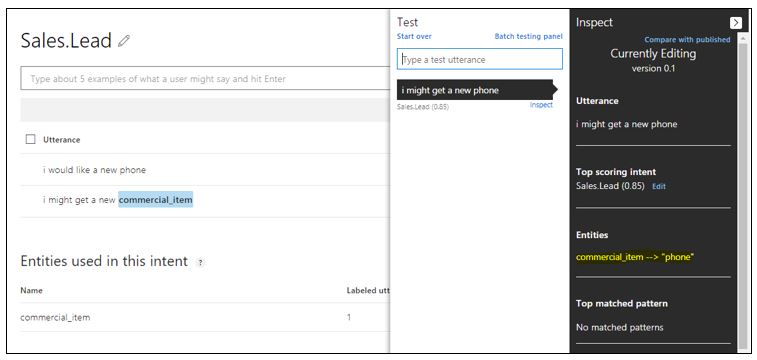

After you’ve trained LUIS with your Intents and Utterances, you can test the language model through an easy-to-use interface in the dashboard. It also features an Inspection Window which breaks down the Utterance, the Top Scoring Intent and the presence of any Entities. You can see this in the screenshot below:

In the above image, you can also see that two sentences have been supplied in the Test window:

- I might get a new phone

- I should get a new phone

Both have been identified as belonging to our Sales.Lead Intent, which is great. More importantly, we didn’t even train LUIS with the phrase “I should get a new phone”. Despite this, LUIS has still been able to identify the underlying Intent, albeit with a slightly lower confidence score of 0.63.

Enrich Language Understanding with Entities

You can further enrich the data LUIS is able to identify in Utterances by defining elements of the sentence where Entities are present. For example, in the following Utterance, we can say the word phone is the Entity:

I might get a new phone

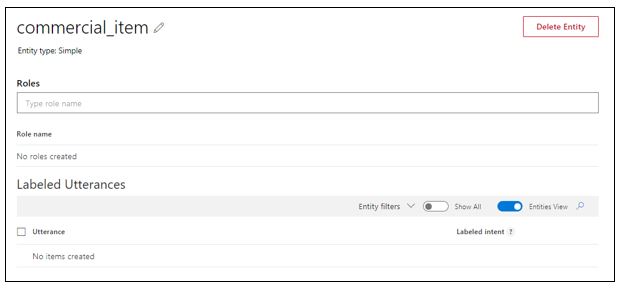

Again, using the LUIS dashboard, you create an Entity and give it a description. In this example, I’ve called it commercial_item.

You then highlight the specific section of the Utterance where the Entity is present and set this. You can see this here:

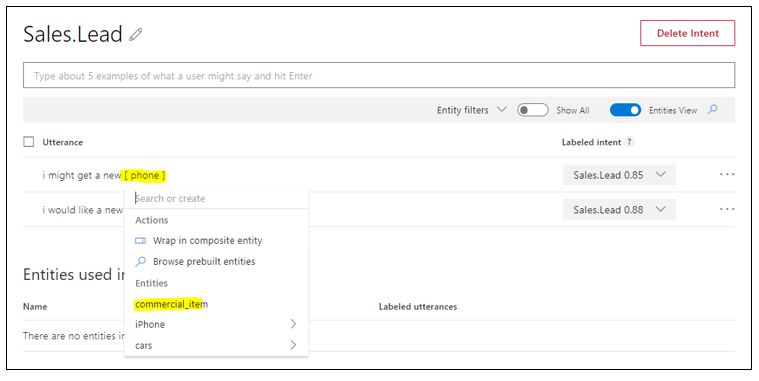

After you’ve done this, you’ll notice the original Utterance has changed and the word phone has now been replaced with commercial_item. The “Entities used in this intent” section also updates to reflect the recent changes, which you can see in the screenshot below:

You can then re-run the original test from the LUIS dashboard and supply the same text as before (I might get a new phone). This time, LUIS has identified the presence of an Entity and its type:

Having access to this kind of data lets you build more innovative solutions, surface further data insights, or even drive business rules in your application.

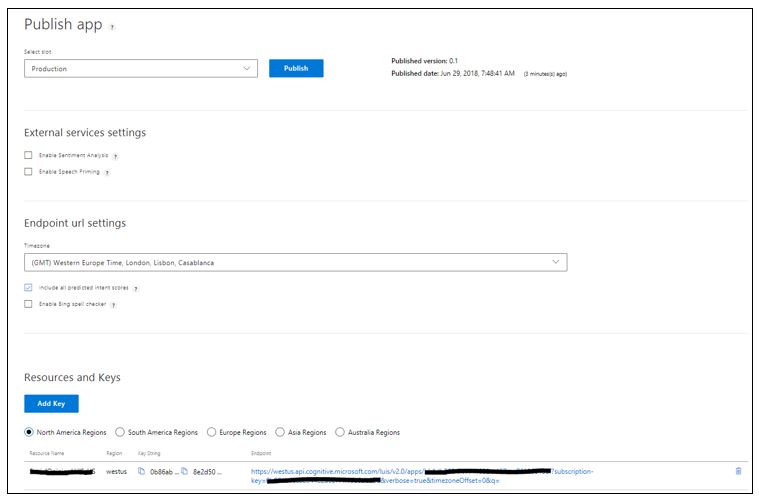
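
As a rough illustration of driving a business rule from this data, the sketch below flags a piece of text as a potential lead only when the Intent, the confidence score and the Entity all line up. The `is_sales_lead` function and the 0.6 threshold are hypothetical choices made for this example, not part of LUIS; the Intent and Entity names are the ones configured earlier in this post.

```python
from typing import Iterable

# Hypothetical threshold chosen for illustration; tune it against your own data.
MIN_CONFIDENCE = 0.6


def is_sales_lead(intent: str, score: float, entity_types: Iterable[str]) -> bool:
    """Flag text as a lead when the intent, score and entity type all match."""
    return (
        intent == "Sales.Lead"
        and score >= MIN_CONFIDENCE
        and "commercial_item" in set(entity_types)
    )


# 0.63 is the confidence score reported for "I should get a new phone" above.
print(is_sales_lead("Sales.Lead", 0.63, ["commercial_item"]))  # True
print(is_sales_lead("Sales.Lead", 0.63, []))                   # False
```

Requiring the Entity as well as the Intent is a design choice: it trades some recall for fewer false positives when the text expresses interest without naming a product.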

Publishing

Configuring your LUIS application through the web dashboard is only one part of the puzzle. After you’ve built and trained your LUIS application, you need to publish it. Publishing is straightforward and can be done from the dashboard, which you can see here:

When you’ve done this, you get a REST endpoint which you can then use to integrate with your existing software!

Testing and Integrating the Endpoint

I’ve used Postman to construct the request that invokes the LUIS REST endpoint we’ve just published. You can see the parameters that are being sent here; note the query “I might get a new phone”.

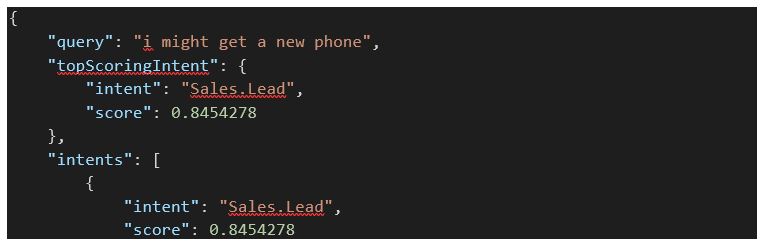

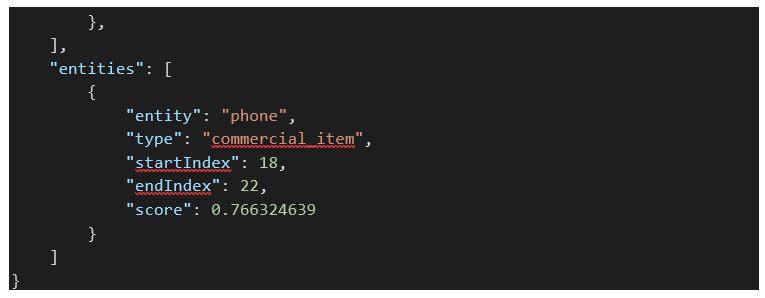
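
If you would rather build the same request in code than in Postman, the sketch below assembles a query URL with Python’s standard library. The URL shape is an assumption based on the v2.0 endpoint format, and the region, application ID and key are placeholders; copy the real endpoint URL from the Publish page of your own application. `build_luis_url` is a hypothetical helper name.

```python
from urllib.parse import urlencode


def build_luis_url(region: str, app_id: str, subscription_key: str, query: str) -> str:
    """Assemble a GET URL for a published LUIS app (assumed v2.0 URL shape)."""
    base = f"https://{region}.api.cognitive.microsoft.com/luis/v2.0/apps/{app_id}"
    params = {
        "subscription-key": subscription_key,
        "verbose": "true",
        "q": query,  # the Utterance to analyse; urlencode escapes it for us
    }
    return f"{base}?{urlencode(params)}"


# Placeholder values; substitute the ones shown for your own application.
url = build_luis_url("westus", "YOUR-APP-ID", "YOUR-KEY", "I might get a new phone")
print(url)
```

The resulting URL can be fetched with any HTTP client, for example `urllib.request.urlopen(url)`.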

When this request is sent to the LUIS application, the following JSON is returned. Here you can see the intent, the confidence score, the entity and its type, and some additional entity metadata.

When you have the data in this format, you can use a library like Json.NET to deserialise it into your existing object model, thereby allowing you to integrate NLP functionality into your software application with minimal fuss!
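
Json.NET is the natural choice in .NET; the same idea can be sketched in Python with only the standard library. The field names below (`topScoringIntent`, `entities`, `startIndex`, `endIndex`) are assumed to follow the v2.0 response shape, the classes are hypothetical, and the sample payload, including its scores, is illustrative rather than the actual response from the request above.

```python
import json
from dataclasses import dataclass
from typing import List


@dataclass
class Entity:
    text: str
    type: str
    start_index: int
    end_index: int


@dataclass
class LuisResult:
    query: str
    intent: str
    score: float
    entities: List[Entity]


def parse_luis_response(raw: str) -> LuisResult:
    """Deserialise a LUIS-style JSON response into a small object model."""
    data = json.loads(raw)
    top = data["topScoringIntent"]
    entities = [
        Entity(e["entity"], e["type"], e["startIndex"], e["endIndex"])
        for e in data.get("entities", [])
    ]
    return LuisResult(data["query"], top["intent"], top["score"], entities)


# Illustrative payload; the scores are placeholders, not real LUIS output.
SAMPLE = """
{
  "query": "I might get a new phone",
  "topScoringIntent": {"intent": "Sales.Lead", "score": 0.9},
  "entities": [
    {"entity": "phone", "type": "commercial_item",
     "startIndex": 18, "endIndex": 22, "score": 0.9}
  ]
}
"""

result = parse_luis_response(SAMPLE)
print(result.intent, result.entities[0].type)
# Sales.Lead commercial_item
```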

Summary

In this blog post, we’ve looked at some of the challenges you might experience when building software that needs to process human language. We’ve looked at how LUIS offers solutions to some of these challenges, explored some of its features and seen how easy it is to integrate LUIS with your existing applications. With businesses such as UPS adopting LUIS, it’s a product you might want to check out!

Are you using LUIS in any of your software products or services?

The Grey Matter Managed Services team are experts at managing Azure-related projects, including Cognitive Services. You can contact them on +44 (0)1364 654100 to discuss Azure and Visual Studio options and costs, or if you require technical advice.


Author

Jamie Maguire

Software Architect at Microsoft for Startups

Jamie is one of our Vendor Marketing Managers, specialising in mapping. He oversees several key vendors, including HERE Technologies, Azure Maps, TomTom and Adobe. During his time as a Marketing Manager across diverse roles, he has specialised in crafting compelling stories and leveraging digital tools for maximum impact.
